The Second Law of Thermodynamics: Entropy, Heat, and the Arrow of Time

The Most Profound Law in Physics

Of all the laws of physics, the second law of thermodynamics is arguably the one with the deepest implications. Newton’s laws tell you how things move. Maxwell’s equations tell you how electromagnetic fields behave. The second law tells you something more fundamental: it tells you the direction time flows.

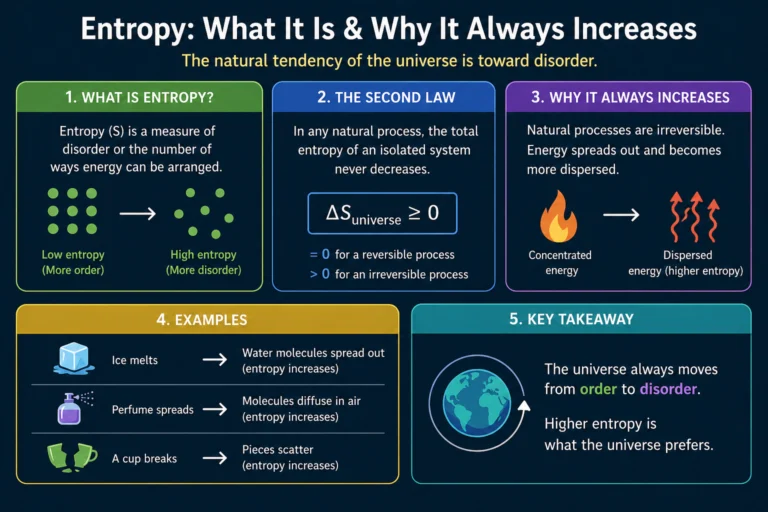

It explains why ice melts but cold water doesn’t spontaneously freeze on a warm day. Why a drop of ink spreads through water but never spontaneously gathers back into a drop. Why heat flows from a hot cup of coffee into the cool air of your room, never the other way around. Why every engine wastes some energy as heat no matter how well it’s designed. All of these are consequences of a single profound principle.

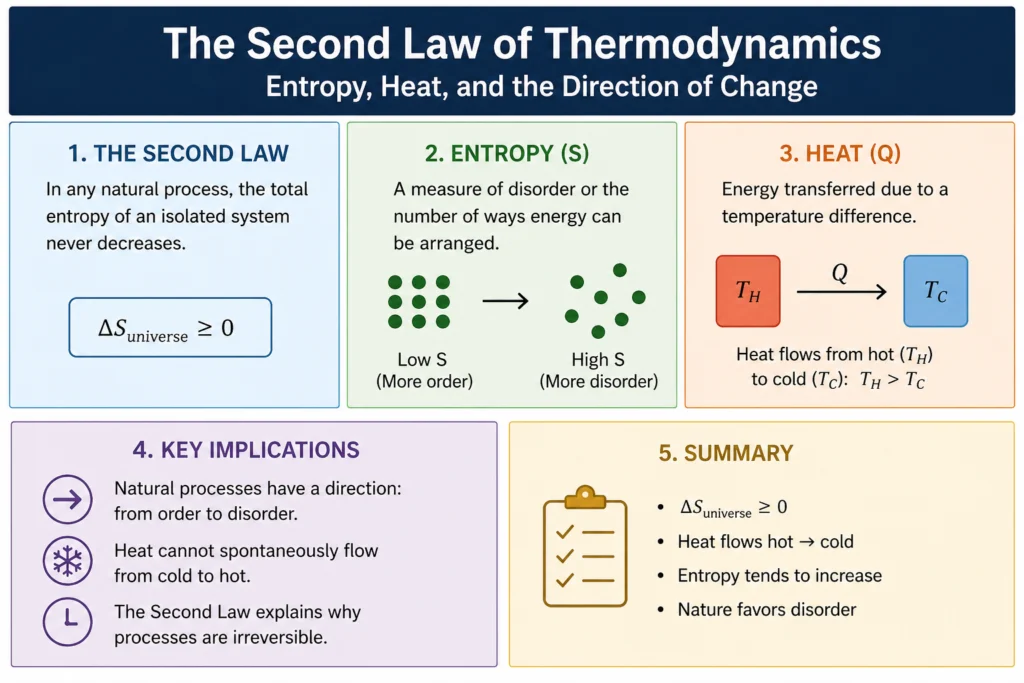

The second law of thermodynamics states that the total entropy of an isolated system never decreases over time. It either increases or, in a perfectly reversible process, stays the same. It always goes up or stays flat. Never down. That’s the law, and it applies to everything in the universe without exception.

What Is Entropy, Really?

Entropy is one of the most misunderstood concepts in physics. It’s often described loosely as disorder or randomness, which is not wrong but is incomplete and can be misleading. A more precise way to think about it is this: entropy measures the number of microscopic arrangements of a system that are consistent with its macroscopic properties.

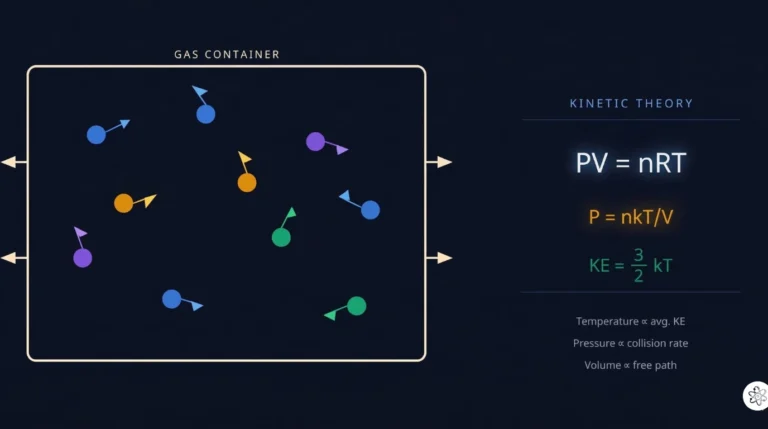

Consider a box of gas molecules. The macroscopic properties you can measure are things like pressure, volume, and temperature. But underlying these bulk properties are the positions and velocities of an enormous number of individual molecules. There are a vast number of different arrangements of those molecules that all give the same pressure, volume, and temperature. Entropy is related to how many such arrangements are possible.

A state with high entropy has many possible microscopic arrangements. A state with low entropy has few. When a system evolves over time, it naturally tends toward states with more possible arrangements, simply because there are more of them to land in. It’s statistics, not magic.
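The drift toward states with more arrangements can be illustrated with a toy simulation. The sketch below uses the Ehrenfest urn model, which is an assumption brought in for illustration and is not part of the original article: all particles start in the left half of a box, and at each step one randomly chosen particle hops to the other half. The function name and parameters are hypothetical.

```python
import random


def ehrenfest(n_particles: int, n_steps: int, seed: int = 0) -> list[int]:
    """Toy model: track how many particles sit in the left half of a box.

    Starts from the lowest-entropy state (every particle on the left).
    At each step one particle is picked uniformly at random and moved
    to the opposite half.
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    n_left = n_particles
    history = [n_left]
    for _ in range(n_steps):
        # The picked particle is on the left with probability n_left / n_particles.
        if rng.randrange(n_particles) < n_left:
            n_left -= 1  # it hops left -> right
        else:
            n_left += 1  # it hops right -> left
        history.append(n_left)
    return history


if __name__ == "__main__":
    history = ehrenfest(n_particles=1000, n_steps=20000)
    # Print a few snapshots of the left-half population over time.
    for step in (0, 500, 2000, 20000):
        print(step, history[step])
```

Nothing in the hopping rule prefers an even split. The count settles near half of the particles on each side only because far more arrangements look like that than look like "everything on the left".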

The mathematical relationship is given by Boltzmann’s formula:

S = k x ln(W)

Where S is entropy, k is Boltzmann’s constant (1.38 x 10^-23 J/K), and W is the number of microscopic arrangements corresponding to the macroscopic state. This equation is so important that it is carved on Ludwig Boltzmann’s tombstone in Vienna.

For a macroscopic system with something like 10^23 molecules, the number of arrangements W is so astronomically large that a spontaneous decrease in entropy is not just unlikely but, for practical purposes, impossible. It is not forbidden by any fundamental law at the level of individual molecules. It is just so fantastically improbable that it never happens in practice.
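To make the counting concrete, here is a minimal sketch that applies Boltzmann’s formula to a simplified model: N molecules that can each sit in the left or right half of a box, with W taken as the number of ways to choose which molecules are on the left. The two-halves model and the function names are illustrative assumptions, not something the article specifies.

```python
import math

K_B = 1.380649e-23  # Boltzmann's constant in J/K


def microstates(n_total: int, n_left: int) -> int:
    """Ways to choose which n_left of n_total molecules are in the left half."""
    return math.comb(n_total, n_left)


def boltzmann_entropy(w: int) -> float:
    """S = k ln(W) for a macrostate with W microscopic arrangements."""
    return K_B * math.log(w)


def prob_all_left(n_total: int) -> float:
    """Chance that every molecule is in the left half at the same moment."""
    return 0.5 ** n_total


if __name__ == "__main__":
    n = 100
    for n_left in (0, 25, 50):
        w = microstates(n, n_left)
        print(f"n_left={n_left:3d}  W={w:.3e}  S={boltzmann_entropy(w):.3e} J/K")
    print(f"P(all {n} molecules on the left) = {prob_all_left(n):.3e}")
```

Even with only 100 molecules, the evenly split state has vastly more arrangements than the all-on-one-side state, and the chance of finding every molecule on the left is already far below anything observable. With 10^23 molecules the imbalance becomes unimaginably larger.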

Heat Flow and the Second Law

One of the most direct consequences of the second law is that heat naturally flows from hot objects to cold objects, never the other way around spontaneously. You never see a cold object spontaneously getting colder while the warm object next to it gets warmer. But why?

When heat flows from a hot object to a cold one, the total entropy of the system increases. The hot object loses some entropy when it loses heat energy, but the cold object gains more entropy than the hot object lost, because the entropy change for a heat transfer Q at temperature T is Q/T, so the same amount of heat counts for more at a lower temperature. The net entropy change is positive, which is exactly what the second law requires.

If heat were to flow from cold to hot spontaneously, the net entropy change would be negative. The total entropy of the system would decrease. This is precisely what the second law forbids.
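The sign argument can be checked with a few lines of arithmetic. This sketch assumes both objects are large enough that their temperatures stay essentially constant during the transfer, so each entropy change is simply the heat divided by the temperature. The function name and sample numbers are hypothetical.

```python
def entropy_change_heat_flow(q_joules: float, t_hot_k: float, t_cold_k: float) -> float:
    """Net entropy change (J/K) when q_joules of heat leaves the hot object
    and enters the cold one. Temperatures are in kelvin and are assumed
    not to change during the transfer.
    """
    ds_hot = -q_joules / t_hot_k    # hot object loses entropy
    ds_cold = q_joules / t_cold_k   # cold object gains more, since t_cold_k is smaller
    return ds_hot + ds_cold


if __name__ == "__main__":
    # 1000 J flowing from a 350 K cup of coffee to 295 K room air.
    print(entropy_change_heat_flow(1000.0, 350.0, 295.0))   # positive: allowed
    # The reverse flow is modeled as a negative heat transfer.
    print(entropy_change_heat_flow(-1000.0, 350.0, 295.0))  # negative: forbidden
```

A negative result signals a process the second law rules out unless something else in the surroundings gains at least that much entropy.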

Refrigerators and air conditioners do move heat from cold to warm, but they don’t violate the second law because they require external work to do so. That work ends up as additional heat released into the warmer surroundings, so the refrigerator rejects more heat than it removes from its interior. The resulting entropy increase of the surroundings is at least as large as the entropy decrease inside the refrigerator, and in any real machine it is larger. Total entropy of the universe still increases.
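The bookkeeping for a refrigerator can be sketched the same way. The example below assumes an idealized device working between two constant-temperature reservoirs, with energy conservation giving heat rejected = heat removed + work input. The function names and numbers are illustrative only.

```python
def total_entropy_change(q_cold: float, work: float, t_cold_k: float, t_hot_k: float) -> float:
    """Entropy change (J/K) of cold interior plus warm surroundings when
    q_cold joules are removed from the interior using `work` joules of input.
    """
    q_hot = q_cold + work            # energy conservation: all of it ends up outside
    return q_hot / t_hot_k - q_cold / t_cold_k


def min_work_to_pump_heat(q_cold: float, t_cold_k: float, t_hot_k: float) -> float:
    """Smallest work input for which the total entropy change is not negative."""
    return q_cold * (t_hot_k / t_cold_k - 1.0)


if __name__ == "__main__":
    q, t_cold, t_hot = 1000.0, 250.0, 300.0
    print(min_work_to_pump_heat(q, t_cold, t_hot))        # about 200 J
    print(total_entropy_change(q, 0.0, t_cold, t_hot))    # negative: impossible with no work
    print(total_entropy_change(q, 300.0, t_cold, t_hot))  # positive: a realistic, lossy fridge
```

With no work input the total entropy change is negative, which is why heat never climbs from cold to warm by itself. Supplying at least the minimum work tips the balance back to zero or above.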

The Carnot Efficiency and Why No Engine Is Perfect

Every heat engine, from a steam engine to a jet turbine to an internal combustion engine, works by taking in heat from a hot reservoir, converting some of it to useful work, and dumping the rest as waste heat into a cold reservoir. The second law sets an absolute upper limit on how efficiently any heat engine can operate.

In 1824, French engineer Sadi Carnot proved that the maximum possible efficiency of any heat engine operating between a hot reservoir at temperature T_hot and a cold reservoir at temperature T_cold is:

Maximum efficiency = 1 - (T_cold / T_hot)

Where temperatures are in kelvin. This is the Carnot efficiency. A perfectly efficient engine would convert all input heat to work with no waste heat, which would require T_cold = 0 kelvin, absolute zero. Since absolute zero is unattainable (third law of thermodynamics), no real engine can be 100% efficient. Ever.

A coal power plant typically operates with steam at about 600 kelvin and exhausts to the environment at about 300 kelvin, giving a Carnot efficiency of 1 minus 300/600 = 50%. Real plants achieve about 35 to 40% efficiency due to additional irreversibilities. The waste heat is not a design failure. It is a fundamental consequence of the second law of thermodynamics.
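The Carnot limit is easy to evaluate for any pair of reservoir temperatures. The helper below is a minimal sketch; its name and input checks are assumptions added for illustration.

```python
def carnot_efficiency(t_cold_k: float, t_hot_k: float) -> float:
    """Upper bound on heat-engine efficiency between two reservoirs.

    Both temperatures must be absolute temperatures in kelvin.
    """
    if t_hot_k <= 0 or t_cold_k < 0:
        raise ValueError("temperatures must be in kelvin (t_hot > 0, t_cold >= 0)")
    if t_cold_k > t_hot_k:
        raise ValueError("cold reservoir cannot be hotter than hot reservoir")
    return 1.0 - t_cold_k / t_hot_k


if __name__ == "__main__":
    # The coal-plant figures quoted above: 600 K steam, 300 K environment.
    print(carnot_efficiency(300.0, 600.0))  # 0.5, i.e. 50%
    # Raising the hot-side temperature raises the ceiling.
    print(carnot_efficiency(300.0, 900.0))
```

The only ways to raise the ceiling are a hotter source or a colder sink, which is one reason power-plant designers push steam temperatures as high as materials allow.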

This is also why perpetual motion machines of the second kind, devices that convert heat entirely to work with no waste, are impossible. Many inventors have claimed to have built such devices. None have ever worked when properly tested, and the second law of thermodynamics tells us why none ever will.

The Arrow of Time

Here is where the second law gets philosophically deep. All the fundamental laws of physics at the microscopic level are time-symmetric. The equations of quantum mechanics, electromagnetism, and even general relativity look the same whether time runs forward or backward. A video of two billiard balls colliding looks physically valid whether played forward or backward.

But the second law is not time-symmetric. Entropy increases toward the future and would decrease toward the past if time ran backward. This asymmetry gives time its direction, the arrow of time. The past is the direction of lower entropy. The future is the direction of higher entropy.

When you watch a video of an egg breaking and then spontaneously reassembling, you immediately know it’s running backward. Why? Because a broken egg has higher entropy than an intact one. Reassembly would require a decrease in entropy, which the second law forbids. Your perception of time’s direction is directly connected to the statistical tendency of entropy to increase.

The Austrian physicist Ludwig Boltzmann spent much of his career developing the statistical mechanical explanation for entropy and the second law. He faced fierce opposition from physicists who believed atoms were not real and who resisted his statistical interpretation. He died by suicide in 1906, before his ideas were vindicated: within a few years, Jean Perrin’s experiments confirmed Einstein’s 1905 explanation of Brownian motion, providing direct evidence for atoms. Today Boltzmann is recognized as one of the founding figures of statistical mechanics.

Examples in Everyday Life

Once you understand entropy, you start seeing it everywhere.

Your bedroom gets messy over time and never spontaneously tidies itself. Low entropy (everything in its place) evolves toward high entropy (random distribution of objects) without effort, and restoring order requires work.

Mixed gases never spontaneously separate. A bottle of air doesn’t spontaneously sort itself into pure nitrogen on one side and pure oxygen on the other. Unmixing would reduce entropy.

Biological organisms maintain low internal entropy by consuming energy and expelling waste heat, increasing the entropy of their surroundings more than they decrease their own. Life doesn’t violate the second law. It works within it, using free energy from the Sun or from food to maintain order locally while increasing total entropy globally.

Information theory, developed by Claude Shannon in 1948, uses a concept of entropy with the same mathematical form as the entropy of statistical mechanics. The entropy of a message measures how much information it carries on average per symbol. A highly ordered, predictable message has low information entropy. A random, unpredictable message has high information entropy. The connection between physical entropy and information entropy is deep and still not fully understood.
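The contrast between predictable and unpredictable messages can be sketched numerically. The function below estimates Shannon entropy in bits per character from the character frequencies of a single string. That frequency-based estimate is a simplifying assumption (it ignores patterns between characters), and the function name is hypothetical.

```python
import math
from collections import Counter


def shannon_entropy_bits(message: str) -> float:
    """Estimated entropy in bits per character, from character frequencies."""
    if not message:
        return 0.0
    counts = Counter(message)
    n = len(message)
    # Sum of p * log2(1/p) over the distinct characters in the message.
    return sum((c / n) * math.log2(n / c) for c in counts.values())


if __name__ == "__main__":
    print(shannon_entropy_bits("aaaaaaaa"))  # one repeated symbol: fully predictable
    print(shannon_entropy_bits("abababab"))  # two equally likely symbols
    print(shannon_entropy_bits("abcdefgh"))  # eight equally likely symbols
```

A string made of one repeated character scores zero, while a string that uses many characters equally often scores highest, mirroring the way a physical state with more possible arrangements has higher entropy.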

Frequently Asked Questions

What does the second law of thermodynamics state?

The second law states that the total entropy of an isolated system never decreases over time. In any spontaneous process, it either increases or, in a perfectly reversible process, remains constant. Entropy of the universe as a whole always increases.

What is entropy in simple terms?

Entropy is a measure of how many microscopic arrangements of a system’s particles are consistent with its observed macroscopic properties. Systems tend to evolve toward states with more possible arrangements, which means higher entropy, simply because there are statistically more ways to be in those states.

Why can’t heat flow from cold to hot spontaneously?

Because such a flow would decrease the total entropy of the system. At lower temperatures the same amount of heat corresponds to more microscopic arrangements and therefore more entropy. Heat flowing from cold to hot would produce a net entropy decrease, which the second law forbids.

Why is no heat engine 100% efficient?

Because converting all heat to work with zero waste heat would require the cold reservoir to be at absolute zero temperature, which is physically impossible according to the third law of thermodynamics. The Carnot efficiency equation shows that maximum efficiency is always less than 100% whenever the cold reservoir is above absolute zero.

What is the arrow of time?

The arrow of time is the observation that time has a preferred direction, from past to future, even though the fundamental laws of physics at the microscopic level are time-symmetric. The second law gives time its direction because entropy increases toward the future and would have to decrease toward the past, which is overwhelmingly improbable on a macroscopic scale.

Does life violate the second law?

No. Living organisms maintain internal order by consuming free energy from food or sunlight and releasing waste heat, which increases the entropy of their surroundings. The total entropy of organism plus surroundings always increases. Life operates within the second law, not in violation of it.

Articles from same category

- kinetic theory of gases

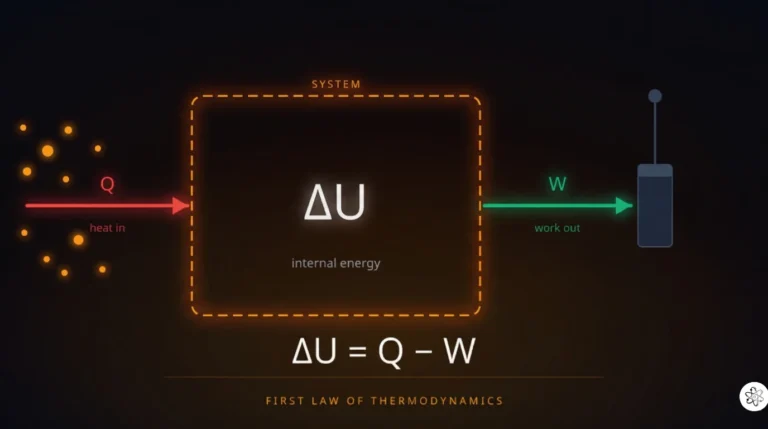

- First Law of Thermodynamics

- What is Energy

- Second Law of Thermodynamics

Frequently Asked Questions

Get physics insights delivered weekly

Join students and educators receiving expert explanations, study tips, and platform updates every Thursday.

Join others. No spam.

allphysicsfundamentals

Making physics accessible, interactive, and genuinely understandable for students at every level.

Learn

- All Articles

- Classical Mechanics

- Thermodynamics

- Waves & Optics

- Electromagnetism

- Modern Physics