Entropy Explained: What It Is & Why It Always Increases

Entropy Is Not What Most People Think

Ask most people what entropy means and they’ll say something like disorder or chaos. That’s not exactly wrong, but it’s not precise enough to be useful in physics. And imprecision here leads to real confusion, because entropy has a specific mathematical definition with specific physical consequences that the word disorder doesn’t fully capture.

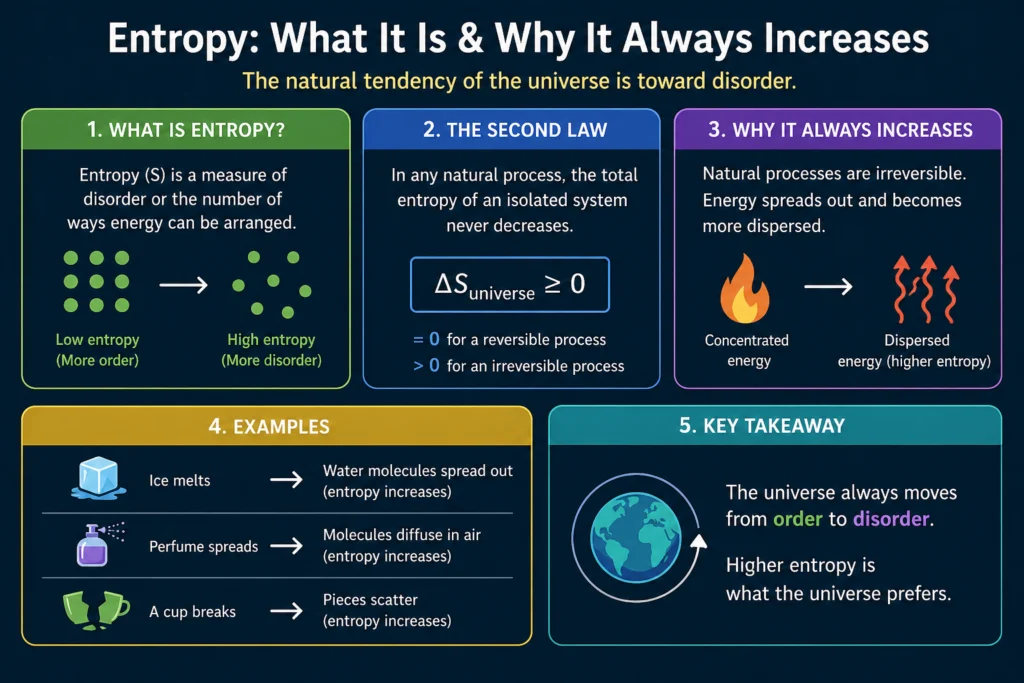

Here’s a better starting point. Entropy is a measure of how many ways a system can be arranged at the microscopic level while still looking the same at the macroscopic level. That’s it. The more ways there are, the higher the entropy. Systems naturally evolve toward higher entropy states not because of any mysterious force but because there are simply more high-entropy states to end up in than low-entropy ones. It’s pure statistics.

Once you really understand this one idea, everything else about entropy follows naturally: why it increases, why heat flows the way it does, why time has a direction, and why no engine is perfectly efficient.

Macrostates and Microstates

To understand entropy properly you need two concepts: macrostates and microstates.

A macrostate is what you can observe and measure about a system using bulk quantities: temperature, pressure, volume, total energy. When you say a gas is at 300 kelvin and 1 atmosphere of pressure, you’re describing its macrostate.

A microstate is a specific configuration of all the individual particles in the system: every molecule’s position and velocity at one instant. For a gas with 10^23 molecules, each microstate specifies a mind-boggling amount of detail that you could never measure in practice.

The key point is that many different microstates correspond to the same macrostate. If you shuffle all the gas molecules into slightly different positions and velocities, the bulk temperature and pressure don’t change. The macrostate looks identical even though the microstate is different.

Entropy measures how many microstates correspond to a given macrostate. A macrostate with a huge number of corresponding microstates has high entropy. A macrostate with few corresponding microstates has low entropy.
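The counting idea can be made concrete with a toy model. The sketch below assumes a deliberately simplified system that is not in the original text: N distinguishable particles, each sitting in either the left or the right half of a box, with the macrostate defined only by how many are on the left. The function name `count_microstates` is illustrative.

```python
from math import comb


def count_microstates(n_particles: int, n_left: int) -> int:
    """Number of ways to choose which n_left of n_particles sit in the left half."""
    return comb(n_particles, n_left)


# Toy system: 10 particles, macrostate = "how many are in the left half".
N = 10
for n_left in range(N + 1):
    print(f"{n_left:2d} on the left: {count_microstates(N, n_left):4d} microstates")

# The evenly split macrostate (5 left, 5 right) has 252 microstates,
# while "all on the left" has exactly 1.
```

Even with only 10 particles, the evenly split macrostate is backed by far more microstates than the lopsided ones, and the imbalance grows extremely quickly as the particle count rises.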

Boltzmann’s Formula

Ludwig Boltzmann formalized this relationship in one of the most important equations in all of physics:

$$S = k \ln W$$

Where S is the entropy of the system in joules per kelvin, k is Boltzmann’s constant equal to 1.38 x 10^-23 J/K, ln is the natural logarithm, and W is the number of microstates corresponding to the current macrostate.

The logarithm does two jobs. First, W for a macroscopic system is an astronomically large number, something like 10 raised to the power of 10^23, and the logarithm brings this down to a manageable scale. Second, it makes entropy additive: two independent systems with W1 and W2 microstates have W1 x W2 combined microstates, and ln(W1 x W2) = ln(W1) + ln(W2), so their entropies simply add.

This formula tells you that entropy is essentially a way of counting microscopic possibilities. Double the number of possible microstates and the entropy increases by k x ln(2). Square the number of microstates and the entropy doubles. The more ways a system can be arranged, the higher its entropy.
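The doubling and squaring rules can be checked numerically. This is a minimal sketch, not a simulation of any real system: the function takes ln(W) rather than W itself (an implementation choice made here, because W for a real gas is far too large to store), and the value W = 10^6 is an arbitrary illustration.

```python
import math

K_B = 1.380649e-23  # Boltzmann's constant in J/K


def boltzmann_entropy(ln_w: float) -> float:
    """Entropy S = k * ln(W), given ln(W) directly to avoid overflow."""
    return K_B * ln_w


W = 10**6  # arbitrary illustrative microstate count
s = boltzmann_entropy(math.log(W))

# Doubling W adds exactly k * ln(2) to the entropy.
s_doubled = boltzmann_entropy(math.log(2 * W))
print(s_doubled - s, K_B * math.log(2))

# Squaring W doubles the entropy, because ln(W**2) = 2 * ln(W).
s_squared = boltzmann_entropy(math.log(W**2))
print(s_squared / s)
```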

Boltzmann’s equation is so fundamental that it is inscribed on his tombstone in the Central Cemetery in Vienna. He spent years fighting for acceptance of this statistical interpretation of entropy and of the atomic theory of matter. The opposition he faced contributed to his declining mental health. He took his own life in 1906, only a few years before experiments on Brownian motion confirmed that atoms were real. It remains one of science’s great tragedies.

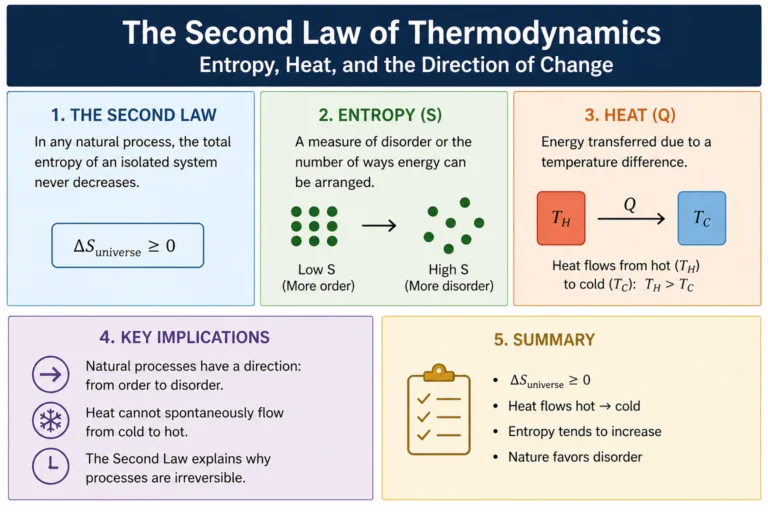

Why Entropy Always Increases

The second law of thermodynamics says the entropy of an isolated system never decreases. But why? Is it a law in the same sense as Newton’s laws, written into the fundamental equations of physics?

Not exactly. At the level of individual particle interactions, physics is time-symmetric. There’s no microscopic law that says entropy must increase. In principle, the molecules of a gas could spontaneously organize themselves into a low-entropy configuration. Nothing in the fundamental laws forbids it.

What forbids it in practice is statistics. Consider a simple example. You have a box divided into two halves. Put all the gas molecules on the left side. That’s a highly ordered, low entropy state. Now remove the divider. The molecules spread throughout the whole box. Why? Not because some force pushes them right. But because the number of microstates with molecules spread throughout the whole box is vastly larger than the number with all molecules on the left. If the molecules bounce around randomly, they’re overwhelmingly likely to end up in a high-entropy state simply because there are so many more of those states to be in.

For a system with just 100 molecules, the probability of all of them spontaneously ending up in the left half is (1/2)^100, which is roughly 10^-30 (more precisely, about 8 x 10^-31). For a real gas with 10^23 molecules the probability is so small it’s effectively zero for any practical purpose. It’s not that it can’t happen. It’s that you’d have to wait longer than the age of the universe many times over.

This statistical explanation of the second law was Boltzmann’s great insight. Entropy increases because high-entropy states are overwhelmingly more probable than low-entropy ones.
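These probabilities are easy to reproduce. The sketch below assumes each molecule independently has a 50% chance of being in the left half. For the 10^23-molecule case it works with the base-10 logarithm of the probability, since the probability itself is too small to represent as a floating-point number. The helper name `log10_prob_all_left` is illustrative.

```python
import math


def log10_prob_all_left(n_molecules: float) -> float:
    """Base-10 logarithm of (1/2)**n_molecules."""
    return -n_molecules * math.log10(2)


# 100 molecules: small enough to compute directly.
print(0.5 ** 100)                # about 7.9e-31
print(log10_prob_all_left(100))  # about -30.1

# 1e23 molecules: only the exponent is representable.
print(log10_prob_all_left(1e23))  # about -3.0e22, i.e. a probability near 10**(-3e22)
```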

Entropy and Temperature

The thermodynamic definition of entropy change is:

$$\Delta S = \frac{Q}{T}$$

Where delta S is the change in entropy, Q is the heat added to the system in joules (strictly, heat added reversibly), and T is the absolute temperature in kelvin at which the heat transfer occurs.

This definition shows why heat transfer at low temperature produces more entropy change than the same heat transfer at high temperature. At low temperatures, molecules have less energy to start with, so adding a given amount of heat energy creates a relatively larger spread of possible new microscopic arrangements. At high temperatures, molecules already have lots of energy and are already spread across many states, so the same heat addition creates a smaller proportional increase in the number of available microstates.

This asymmetry is exactly why heat spontaneously flows from hot to cold. When heat Q flows from a hot object at temperature T_hot to a cold object at temperature T_cold, the entropy decrease of the hot object (Q/T_hot) is smaller than the entropy increase of the cold object (Q/T_cold), because T_cold is less than T_hot. The net entropy change is positive. Total entropy increases. The process is spontaneous.

If heat were to flow from cold to hot, the math reverses. The entropy decrease of the cold object would exceed the entropy increase of the hot object, giving a net negative entropy change. The second law forbids this.
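The bookkeeping for both directions of heat flow can be sketched in a few lines. This assumes both objects are large enough that their temperatures stay essentially constant during the transfer. The function name and the numbers (1000 J moving between objects at 400 K and 300 K) are illustrative choices, not values from the text.

```python
def net_entropy_change(q: float, t_from: float, t_to: float) -> float:
    """Total entropy change in J/K when heat q (J) leaves an object at
    t_from (K) and enters an object at t_to (K)."""
    if t_from <= 0 or t_to <= 0:
        raise ValueError("absolute temperatures must be positive")
    loss = -q / t_from  # entropy lost by the object giving up heat
    gain = q / t_to     # entropy gained by the object receiving heat
    return loss + gain


hot_to_cold = net_entropy_change(1000.0, t_from=400.0, t_to=300.0)
cold_to_hot = net_entropy_change(1000.0, t_from=300.0, t_to=400.0)

print(hot_to_cold)  # positive (about +0.83 J/K): allowed by the second law
print(cold_to_hot)  # negative (about -0.83 J/K): forbidden without outside work
```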

Free Energy: Entropy’s Practical Partner

In chemistry and engineering, it’s often more useful to work with free energy than with entropy directly. Free energy combines entropy with internal energy to give a single quantity that tells you whether a process will occur spontaneously under given conditions.

The Gibbs free energy is defined as:

$$G = H - TS$$

Where H is the enthalpy (a measure of total heat content), T is absolute temperature, and S is entropy. A process occurs spontaneously at constant temperature and pressure if delta G is negative, meaning the Gibbs free energy decreases.

A process can be spontaneous even if it decreases entropy (delta S negative) as long as it releases enough heat energy (negative delta H) to compensate. And a process can be spontaneous even if it absorbs heat (positive delta H) if the entropy increase is large enough.

Dissolving table salt in water is a good example. The process absorbs a small amount of heat from the surroundings (slightly endothermic) but is spontaneous because the entropy increase of mixing ions throughout the water outweighs the energy cost. The Gibbs free energy decreases overall.
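The sign rule for delta G can be sketched as a small calculation at constant temperature and pressure, where delta G = delta H minus T x delta S. The function names are illustrative, and the numbers below are made-up round values chosen to show each case. They are not measured data for salt or any other substance.

```python
def gibbs_change(delta_h: float, temperature: float, delta_s: float) -> float:
    """Delta G in J/mol, from delta H (J/mol), T (K), and delta S (J/(mol K))."""
    return delta_h - temperature * delta_s


def is_spontaneous(delta_h: float, temperature: float, delta_s: float) -> bool:
    """A process is spontaneous at constant T and P when delta G is negative."""
    return gibbs_change(delta_h, temperature, delta_s) < 0


# Endothermic but entropy-driven (illustrative values): spontaneous.
print(is_spontaneous(delta_h=4000.0, temperature=298.0, delta_s=40.0))

# Exothermic with an entropy decrease (illustrative values):
# spontaneous at 298 K, but not at 600 K, where the T x delta S term wins.
print(is_spontaneous(delta_h=-50000.0, temperature=298.0, delta_s=-100.0))
print(is_spontaneous(delta_h=-50000.0, temperature=600.0, delta_s=-100.0))
```

The last two lines show why temperature matters: the same reaction can switch from spontaneous to non-spontaneous as T rises, because the entropy term is weighted by T.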

Entropy and Information Theory

In 1948, American mathematician Claude Shannon was developing a mathematical theory of communication and information transmission. He needed a way to quantify how much information a message contains. The measure he developed, which he called information entropy, turned out to be mathematically identical in form to Boltzmann’s thermodynamic entropy.

Shannon’s entropy for a probability distribution:

$$H = -\sum_i p_i \log p_i$$

Where p_i is the probability of outcome i and the sum runs over all possible outcomes. When all W outcomes are equally likely, each p_i equals 1/W and the sum reduces to log(W), which is Boltzmann’s formula without the constant k. It’s not a coincidence. Information and physical entropy are deeply connected.

A perfectly predictable message, one you could completely reconstruct without receiving it, carries no information. Its entropy is zero because there’s only one possibility. A perfectly random message, where every symbol is equally likely and independent of all others, carries maximum information. Its entropy is maximum because all possibilities are equally probable.
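Both limiting cases can be checked with a direct implementation of Shannon's formula. This is a minimal sketch that measures entropy in bits (logarithm base 2), a common convention that the text does not specify. The function name `shannon_entropy` is illustrative.

```python
import math


def shannon_entropy(probs: list[float], base: float = 2.0) -> float:
    """Shannon entropy of a discrete distribution; bits when base is 2."""
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must be non-negative and sum to 1")
    # Outcomes with p == 0 contribute nothing and are skipped.
    return sum(-p * math.log(p, base) for p in probs if p > 0)


print(shannon_entropy([1.0]))       # certain outcome: zero entropy
print(shannon_entropy([0.5, 0.5]))  # fair coin: 1 bit
print(shannon_entropy([0.9, 0.1]))  # biased coin: less than 1 bit
print(shannon_entropy([0.25] * 4))  # four equally likely symbols: 2 bits
```

The last case illustrates the link to Boltzmann's formula: for W equally likely outcomes the result is log(W), here log2(4) = 2.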

The connection between information and thermodynamics has deep implications. Erasing a bit of information, resetting a memory bit from an unknown state to zero, necessarily dissipates at least kT x ln(2) of energy as heat. This is Landauer’s principle, proposed by Rolf Landauer in 1961 and experimentally confirmed in 2012. Information is not just an abstract mathematical concept. It has physical substance and thermodynamic consequences.
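To get a feel for the size of the Landauer limit, the sketch below evaluates kT x ln(2) at room temperature. The choice of 300 K and the one-gigabyte example are assumptions made here for illustration, and the function name is hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann's constant in J/K


def landauer_limit(temperature_k: float, n_bits: float = 1.0) -> float:
    """Minimum heat in joules dissipated by erasing n_bits at temperature_k."""
    return n_bits * K_B * temperature_k * math.log(2)


per_bit = landauer_limit(300.0)
per_gigabyte = landauer_limit(300.0, n_bits=8e9)

print(per_bit)       # about 2.87e-21 J per bit at 300 K
print(per_gigabyte)  # about 2.3e-11 J to erase one gigabyte
```

The limit is tiny compared with the energy real hardware spends per bit operation, which is why it went untested for decades after Landauer proposed it.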

Maxwell’s Demon

In 1867, James Clerk Maxwell proposed a famous thought experiment that seemed to challenge the second law. Imagine a box of gas divided into two halves by a partition. A tiny intelligent being, later called Maxwell’s demon, sits at a tiny door in the partition. When a fast molecule approaches from the right, the demon opens the door and lets it through to the left. When a slow molecule approaches from the left, the demon opens the door and lets it through to the right.

After a while, all the fast molecules are on the left and all the slow ones are on the right. The left side has gotten hotter and the right side has gotten cooler, without any work being done. The demon has apparently decreased the entropy of the gas and violated the second law.

The resolution took more than a century to emerge, through the work of Leo Szilard (1929), Rolf Landauer (1961), and Charles Bennett (1982). The demon must measure each molecule’s speed to decide whether to open the door. That measurement stores one bit of information in the demon’s memory. When the demon eventually erases that information, which it must do to avoid its memory filling up, the erasure dissipates heat into the environment. The entropy decrease in the gas is at least compensated by the entropy increase from erasing the demon’s memory. The second law survives.

Maxwell’s demon shows that information and thermodynamics are not just analogous. They are the same thing at a fundamental level.

Entropy in the Universe

The universe started in an extremely low entropy state at the Big Bang. All matter and energy were compressed into an incredibly hot, dense, but remarkably smooth and uniform state. Smooth and uniform sounds like high entropy, but gravitationally it was actually low entropy. Gravity makes clumping the preferred state, so a smooth uniform distribution of matter is low-entropy from a gravitational perspective.

As the universe evolved, gravity caused matter to clump into stars and galaxies. Stars burn nuclear fuel, increasing entropy. When stars die they produce white dwarfs, neutron stars, or black holes. Black holes have the highest entropy of any known object in the universe. A black hole’s entropy is proportional to the area of its event horizon, not its volume, a result proposed by Jacob Bekenstein in 1972 and made precise by Stephen Hawking in 1974. It remains one of the deepest results in theoretical physics.
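For reference, the area law mentioned above is usually written as the Bekenstein-Hawking formula. The symbols are not defined in the text and are stated here as the standard ones: A is the area of the event horizon, k_B is Boltzmann's constant, c is the speed of light, G is Newton's gravitational constant, and ħ is the reduced Planck constant. The second line assumes the simplest case, a non-rotating, uncharged black hole of mass M.

```latex
S_{\mathrm{BH}} = \frac{k_B c^3}{4 G \hbar}\, A
\qquad\text{with}\qquad
A = \frac{16 \pi G^2 M^2}{c^4}
```

Because A grows as M squared, doubling a black hole's mass quadruples its entropy.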

The universe’s entropy has been increasing ever since the Big Bang and will continue to do so. The ultimate fate of the universe, according to thermodynamics, is a state of maximum entropy sometimes called the heat death of the universe, where all temperature differences have equalized, all free energy is exhausted, and no further work can be extracted from any process. This would be a state of perfect thermodynamic equilibrium with no gradients, no structure, no complexity, and nothing happening. The timeline for this is mind-bendingly long, many orders of magnitude longer than the current age of the universe.

Frequently Asked Questions

What is entropy in simple terms?

Entropy is a measure of how many microscopic arrangements of a system’s particles are consistent with what you can observe at the macroscopic level. The more arrangements are possible, the higher the entropy. Systems spontaneously evolve toward higher entropy states because there are statistically more high-entropy states to end up in.

Why does entropy always increase?

Entropy increases because high-entropy states have vastly more corresponding microscopic arrangements than low-entropy states. When a system evolves randomly at the molecular level, it’s overwhelmingly likely to move toward states with more arrangements, which means higher entropy. It’s not a force or a law in the fundamental sense. It’s pure statistics applied to enormous numbers of particles.

What is Boltzmann’s entropy formula?

S = k x ln(W), where S is entropy, k is Boltzmann’s constant (1.38 x 10^-23 J/K), and W is the number of microscopic arrangements corresponding to the system’s macroscopic state. This formula connects the microscopic statistical picture of entropy with the macroscopic thermodynamic one.

How is entropy related to information?

Claude Shannon showed in 1948 that information entropy, a measure of how unpredictable or information-rich a message is, has the same mathematical form as Boltzmann’s thermodynamic entropy. The connection is physical as well as mathematical: erasing information necessarily dissipates heat, as shown by Landauer’s principle, confirmed experimentally in 2012.

What is the heat death of the universe?

The heat death is the theoretical ultimate fate of the universe, a state of maximum entropy where all temperature differences have equalized, no free energy remains, and no further physical processes can occur. It’s a state of perfect thermodynamic equilibrium. The timeline is extraordinarily long, far beyond the current age of the universe.

Can entropy ever decrease?

In an isolated system, entropy never decreases. In an open system that exchanges energy with its surroundings, local entropy can decrease as long as the entropy of the surroundings increases by at least as much. This is how refrigerators work and how life maintains internal order. Total entropy of system plus surroundings always increases or stays the same.

Articles from same category

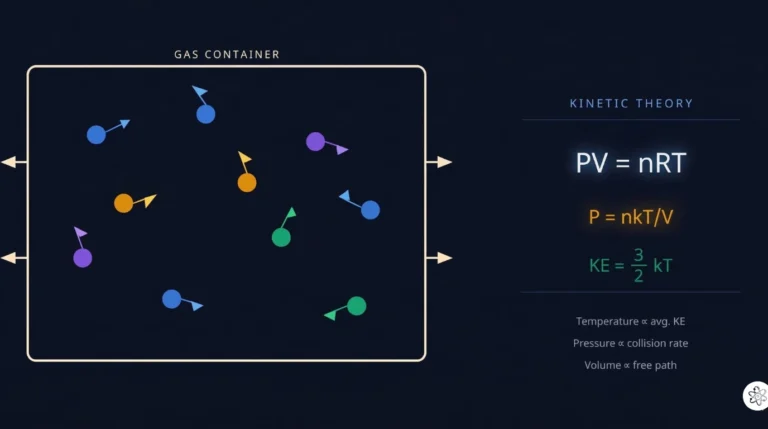

- kinetic theory of gases

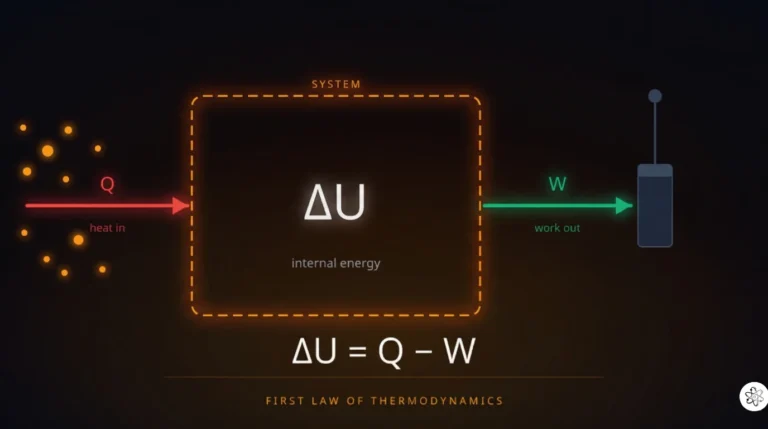

- First Law of Thermodynamics

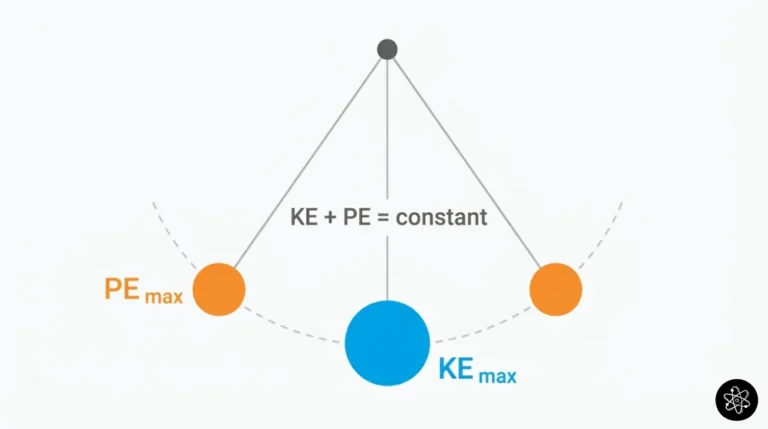

- What is Energy

- Second Law of Thermodynamics

- Entropy Explained

Frequently Asked Questions

Get physics insights delivered weekly

Join students and educators receiving expert explanations, study tips, and platform updates every Thursday.

Join others. No spam.

allphysicsfundamentals

Making physics accessible, interactive, and genuinely understandable for students at every level.